Here’s a post about one of my Kaggle competitions, the State Farm Distracted Driver Detection challenge. It’s a very interesting challenge, one that pits computer vision against foolish drivers who have the nerve to text while driving.

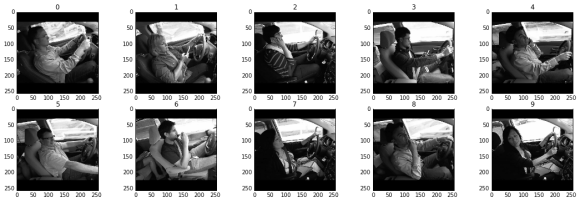

Well, technically, these drivers aren’t really doing anything risky: these are staged images, shot while the car was being towed by a truck. Still, it’s an interesting and very important problem to solve. Given that it will be a long time before even half of the world’s cars drive themselves, we should at least offer ways to safeguard against these risky behaviours. The distractions make up the ten classes of this competition: safe driving (0), texting with the right hand (1), talking on the phone with the right hand (2), texting with the left hand (3), talking on the phone with the left hand (4), operating the radio (5), drinking (6), reaching behind (7), adjusting hair or applying makeup (8) and talking to the passenger (9). The dataset has ~20k training images and nearly 80k test images.

The competition is still ongoing, so I won’t post my solutions yet. But I do have something for those of you struggling with datasets so large that they won’t fit in memory. One solution comes to mind: load as large a batch as possible, feed it to an online classifier for a single epoch, then load the next one, and repeat. This works in practice, but we can do better. Reading from disk takes a long time, sometimes even with SSDs, and while the classifier waits for the next batch it sits idle. So we have to hide, or at least amortize, the cost of reading from disk. That solves one half of the problem; the other half, of course, is building a fast online classification algorithm.
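
Here is a minimal sketch of that naive loop, assuming examples arrive as (feature vector, label) pairs from any iterable. The `OnlinePerceptron` class and the `iter_batches` and `train_out_of_core` helpers are hypothetical stand-ins for illustration; in practice you might reach for something like scikit-learn’s `SGDClassifier.partial_fit` instead. The point is only the shape of the loop: one batch in memory at a time, one pass over it, then on to the next.

```python
from itertools import islice
from typing import Iterable, Iterator, List, Sequence, Tuple

# An example is (feature vector, label in {0, 1})
Example = Tuple[Sequence[float], int]


def iter_batches(examples: Iterable[Example], batch_size: int) -> Iterator[List[Example]]:
    """Yield lists of at most batch_size examples, so only one batch is in memory."""
    it = iter(examples)
    while True:
        batch = list(islice(it, batch_size))
        if not batch:
            return
        yield batch


class OnlinePerceptron:
    """Hypothetical stand-in for an online classifier: a binary perceptron."""

    def __init__(self, n_features: int, lr: float = 1.0):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = lr

    def predict(self, x: Sequence[float]) -> int:
        score = sum(wi * xi for wi, xi in zip(self.w, x)) + self.b
        return 1 if score > 0 else 0

    def partial_fit(self, batch: List[Example]) -> None:
        # One pass over this batch only; the batch can be discarded afterwards
        for x, y in batch:
            error = y - self.predict(x)  # -1, 0 or +1
            if error:
                self.w = [wi + self.lr * error * xi for wi, xi in zip(self.w, x)]
                self.b += self.lr * error


def train_out_of_core(examples: Iterable[Example], n_features: int,
                      batch_size: int) -> OnlinePerceptron:
    model = OnlinePerceptron(n_features)
    for batch in iter_batches(examples, batch_size):
        model.partial_fit(batch)  # load a batch, learn from it once, move on
    return model
```

Notice that nothing overlaps here: while a batch is being loaded, the classifier sits idle, which is exactly the waste the rest of this post tries to remove.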

I scoured the forums for a solution, and it turns out we run into our old friend, concurrency. We can create two groups of independent workers: the first are the readers, and the second are the consumers. The consumer, as you might have guessed, is the algorithm that learns the decision function from the data the readers supply. Connect the two with a bounded queue, add some semaphores into the mix, and we have the classic producer-consumer pattern all over again, now applied to hardcore machine learning.
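
To make the semaphores concrete, here is a sketch of a bounded producer-consumer buffer built by hand from two `threading.Semaphore` objects and a lock. `BoundedBuffer` and `run_pipeline` are hypothetical names, and the squared integers stand in for data read from disk. Python’s `queue.Queue` gives you the same blocking behaviour out of the box, so this is for understanding rather than for production.

```python
import threading
from collections import deque


class BoundedBuffer:
    """Classic producer-consumer buffer built from two semaphores and a lock."""

    def __init__(self, capacity: int):
        self.items = deque()
        self.lock = threading.Lock()
        self.free_slots = threading.Semaphore(capacity)  # blocks producers when full
        self.filled_slots = threading.Semaphore(0)       # blocks consumers when empty

    def put(self, item) -> None:
        self.free_slots.acquire()      # wait for room
        with self.lock:
            self.items.append(item)
        self.filled_slots.release()    # signal: one more item available

    def get(self):
        self.filled_slots.acquire()    # wait for an item
        with self.lock:
            item = self.items.popleft()
        self.free_slots.release()      # signal: one more free slot
        return item


def run_pipeline(n_items: int, capacity: int = 4) -> list:
    buf = BoundedBuffer(capacity)
    done = object()  # sentinel marking the end of the stream

    def reader():
        for i in range(n_items):
            buf.put(i * i)  # stand-in for "read one example from disk"
        buf.put(done)

    threading.Thread(target=reader, daemon=True).start()

    consumed = []
    while True:
        item = buf.get()
        if item is done:
            break
        consumed.append(item)  # stand-in for "train on this example"
    return consumed
```

The capacity is what keeps memory bounded: a fast reader can only run `capacity` items ahead of the learner before it blocks.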

First, write a generator. A generator in Python can be as simple as the following:

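```python
def myGenerator(file_path):
    # Open the file and read it lazily, one line at a time
    with open(file_path) as reader:
        for line in reader:
            # Each line looks like "X:y"; drop the trailing newline before splitting
            X, y = line.rstrip("\n").split(":", 1)
            yield X, y
```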

This function reads a file line by line and yields an (X, y) pair for each line, split on the “:” delimiter. What’s special about it is that values are produced lazily, one at a time, only when you ask for them, for example in a loop:

```python
for X, y in myGenerator("data_file.csv"):
    print(X, y)
```
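
Because generators are lazy, they also compose: one generator can consume another. Here is a small sketch, assuming a hypothetical `parse_lines` variant of `myGenerator` that takes any iterable of lines instead of a path (so it can be fed an in-memory `io.StringIO` here), plus a hypothetical second stage, `as_numbers`, that converts the strings to numbers:

```python
import io
from itertools import islice


def parse_lines(lines):
    """Hypothetical variant of myGenerator that accepts any iterable of "X:y"
    lines (an open file, a StringIO, a list of strings) instead of a path."""
    for line in lines:
        X, y = line.rstrip("\n").split(":", 1)
        yield X, y


def as_numbers(pairs):
    """A second generator stage: convert each (X, y) string pair to (float, int)."""
    for X, y in pairs:
        yield float(X), int(y)


fake_file = io.StringIO("0.5:1\n1.5:0\n2.5:1\n3.5:0\n")
pipeline = as_numbers(parse_lines(fake_file))  # nothing has been consumed yet

first_two = list(islice(pipeline, 2))  # consumes only the first two lines
rest = list(pipeline)                  # resumes exactly where it stopped
print(first_two)  # [(0.5, 1), (1.5, 0)]
print(rest)       # [(2.5, 1), (3.5, 0)]
```

Each stage holds only one item at a time, so the same pipeline works unchanged on a file far larger than memory.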

And finally, the secret sauce: the reader process that feeds us data through a queue can itself be implemented as a Python generator! Better still, generators can be chained together, much like the decorator pattern from software engineering. See this:

```python
import queue
import threading


def threaded_generator(generator, num_cached=50):
    # bounded queue: the producer blocks once num_cached items are waiting
    item_queue = queue.Queue(maxsize=num_cached)
    sentinel = object()  # guaranteed unique reference

    # define producer (putting items into queue)
    def producer():
        for item in generator:
            item_queue.put(item)
        item_queue.put(sentinel)

    # start producer (in a background thread)
    thread = threading.Thread(target=producer)
    thread.daemon = True
    thread.start()

    # run as consumer (read items from queue, in current thread)
    item = item_queue.get()
    while item is not sentinel:
        yield item
        item_queue.task_done()
        item = item_queue.get()
```

Note that we could have as many producers as we want, if we want to make full use of our CPU.
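
The `threaded_generator` above starts a single producer. One way to get several, sketched here as a hypothetical `multi_threaded_generator`, is to give each producer thread its own source generator (say, one per shard of the image files) and have the consumer stop only after it has seen one sentinel per producer. Items from different producers arrive interleaved, so do not rely on their order.

```python
import queue
import threading


def multi_threaded_generator(generators, num_cached=50):
    """Hypothetical extension of threaded_generator: one producer thread per
    source generator, all feeding a single bounded queue."""
    generators = list(generators)
    item_queue = queue.Queue(maxsize=num_cached)
    sentinel = object()  # each producer puts one of these when it is finished

    def producer(gen):
        try:
            for item in gen:
                item_queue.put(item)
        finally:
            item_queue.put(sentinel)  # always signal completion, even on error

    for gen in generators:
        threading.Thread(target=producer, args=(gen,), daemon=True).start()

    # Consume until every producer has signalled that it is finished
    remaining = len(generators)
    while remaining:
        item = item_queue.get()
        if item is sentinel:
            remaining -= 1
        else:
            yield item
```

Threads pay off here because reading files blocks on I/O, which releases CPython’s global interpreter lock. CPU-heavy preprocessing written in pure Python will not run in parallel across threads; for that, separate processes (for example via the `multiprocessing` module) would be the usual choice.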

Then we chain our two generators via:

```python
file_gen = myGenerator("data_file.csv")
threaded_gen = threaded_generator(file_gen, num_cached=50)

for X, y in threaded_gen:
    print(X, y)
```

With this, I’ve shaved some precious seconds off the time spent reading from disk! That matters, since we’re talking about thousands of iterations here, which could add up to tens of thousands of seconds saved.
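
How much can overlapping save? If reading a batch takes r seconds and a training step takes c seconds, the sequential loop costs roughly N(r + c) for N batches, while overlapping the two brings it down to about r + N·max(r, c), so the time saved per batch is about min(r, c). The sketch below checks this with simulated costs: `time.sleep` stands in for both disk reads and training, and the 0.02 s figures are made up. It uses `concurrent.futures.ThreadPoolExecutor` for simple double buffering, a swapped-in alternative to the queue-based `threaded_generator`, not the generator chain used in this post.

```python
import time
from concurrent.futures import ThreadPoolExecutor

READ_SECONDS = 0.02   # hypothetical cost of reading one batch from disk
TRAIN_SECONDS = 0.02  # hypothetical cost of one training step


def read_batch(i):
    time.sleep(READ_SECONDS)  # simulated disk I/O (sleep releases the GIL)
    return i


def train_step(batch):
    time.sleep(TRAIN_SECONDS)  # simulated training work


def sequential(n_batches):
    """Read, then train, one batch after another."""
    start = time.perf_counter()
    for i in range(n_batches):
        train_step(read_batch(i))
    return time.perf_counter() - start


def overlapped(n_batches):
    """Double buffering: read batch i+1 in the background while training on batch i."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(read_batch, 0)
        for i in range(n_batches):
            batch = pending.result()  # wait for the batch read in the background
            if i + 1 < n_batches:
                pending = pool.submit(read_batch, i + 1)  # start the next read now
            train_step(batch)  # ...and train while it loads
    return time.perf_counter() - start


if __name__ == "__main__":
    n = 25
    print(f"sequential: {sequential(n):.2f}s  overlapped: {overlapped(n):.2f}s")
```

With equal read and training costs, the overlapped loop should take roughly half as long; when one side dominates, the saving shrinks toward the smaller of the two per-batch costs.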

That’s a little boost for detecting distracted drivers with machine learning. Hopefully you can use it in your own projects too. Once the competition is over, I’ll post my humble solutions.

You can see the original posts I found here and here. Thanks a lot, you guys.