Autoencoders are a simple neural network approach to recommendation

Variational autoencoders can discover unique ways to encode and decode the input from a distribution. Bonus: They can generate images!

Back in college, I did a class project where I used computer vision techniques for the first time. It was the age before deep learning. Today, I want to revisit this old project.

In this video, I’ll discuss my solution to the SIIM-ISIC Melanoma Classification Challenge hosted on Kaggle.

Hi all! I’m trying out this new format of publishing my projects through PowerPoint videos. Recording this way is fun. Let me know if this is a better format for you. This pet project is my tribute to books. As a machine learning person, I’d like to see if I can artificially create images of […]

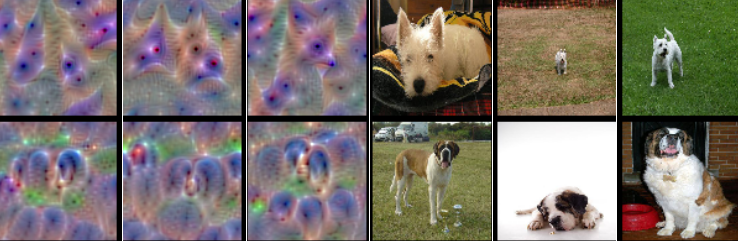

Time for another vision blog. Here, I’ll talk about dogs. Humans can identify a dog from a cat well enough, but it’s a little harder to distinguish specific dog breeds. For instance, can you tell which is which in the following? Well, on the left is a Siberian Husky and on the right is an […]

Hi! This is my first attempt to publish a Jupyter notebook to WordPress. Jupyter notebooks are an increasingly popular way to combine code, results and content in one viewable platform. Notebooks use ‘kernels’ as interpreters for scripting languages. So far, I’ve seen Python, Julia and R kernels here. (The full notebook is over at GitHub. […]

A blog post on the distracted drivers Kaggle competition.

Another interesting thing about deep learning is that, being inspired by the neural networks in our heads, it resembles how our brains work. Our brains have different areas for different stimuli, and our neurons combine these signals hierarchically.