Time for another vision blog. Here, I’ll talk about dogs. Humans can tell a dog from a cat easily enough, but distinguishing specific dog breeds is a little harder. For instance, can you tell which is which in the following?

Well, on the left is a Siberian Husky and on the right is an Alaskan Malamute. Malamutes are larger than huskies, although that’s hard to tell from pictures. In the pictures above, the giveaway is the tail: a husky’s tail hangs down while a malamute’s curls upward. That’s overly specific, yeah? What about the next pair?

On the left, we have a Boston Terrier and on the right, a French Bulldog. Both share a common ancestor, the English Bulldog. Besides Boston Terriers standing taller and French Bulldogs packing more muscle, there is a more obvious difference: the tuxedo-like markings on the Boston Terrier.

Sounds like a trained human could spot the difference between dog breeds. Can a machine learn to do the same? What does it see when looking at dogs?

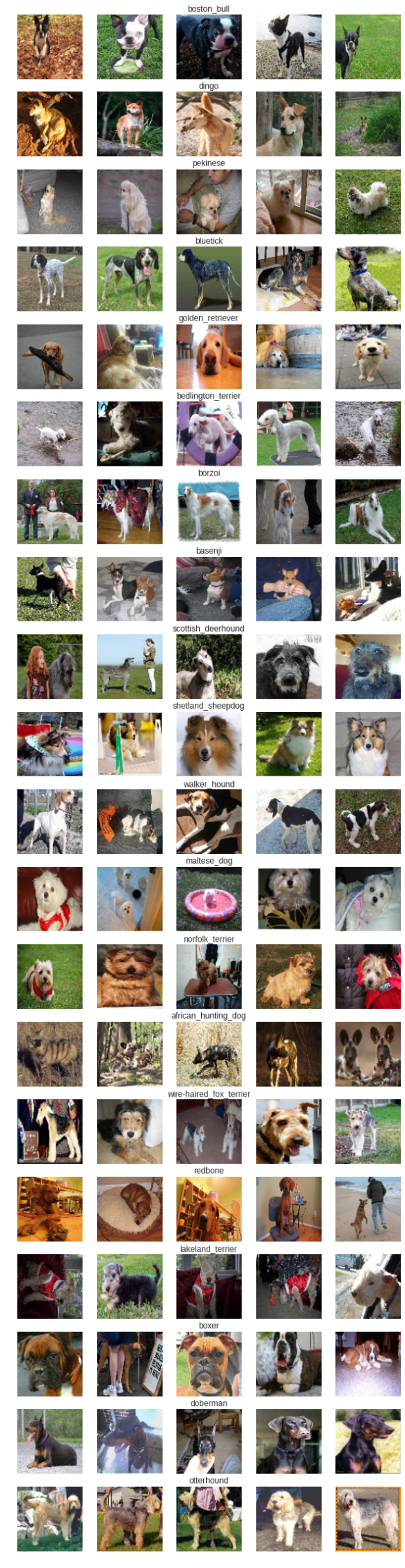

For reference, here’s our dataset: 120 dog breeds with roughly 60 to 100 pictures each. This is the link to the original.

Here’s a look at some 20 of the dog breeds. Are you feeling doggone amused now?

How did we do?

I’ll be using a VGGNet model pretrained on ImageNet, a much larger collection of images. One can already achieve good results by taking an off-the-shelf model and modifying only its top layers; this turns the convnet into a feature extractor, which I’ll show later. I’ve also allowed the layers of the topmost convolutional block to keep learning, which helps the transfer to this specific dog domain.
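The idea of freezing a pretrained network and training only a new top can be sketched without a deep learning framework. The toy below is a stand-in under stated assumptions, not the VGGNet setup from this post: a fixed random matrix `W_base` plays the role of the frozen pretrained layers, and only a linear softmax head `W_head` is trained by gradient descent. The function name and data are hypothetical. In Keras, the corresponding switch is setting `layer.trainable = False` on the pretrained layers you want to freeze.

```python
import numpy as np


def softmax(z):
    # Subtract the row max for numerical stability before exponentiating.
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def train_head_on_frozen_base(X, y, W_base, num_classes, lr=0.1, steps=300, seed=0):
    """Hypothetical toy: train a softmax head on top of a frozen feature extractor.

    W_base stands in for the frozen pretrained layers. It is used in the forward
    pass but never updated, so the features can be computed once up front.
    """
    rng = np.random.default_rng(seed)
    feats = np.maximum(X @ W_base, 0.0)  # frozen "convnet" features (ReLU)
    W_head = 0.01 * rng.standard_normal((feats.shape[1], num_classes))
    onehot = np.eye(num_classes)[y]
    rows = np.arange(len(y))
    losses = []
    for _ in range(steps):
        probs = softmax(feats @ W_head)
        losses.append(float(-np.mean(np.log(probs[rows, y] + 1e-12))))
        # Gradient of the mean cross-entropy with respect to the head weights only.
        grad = feats.T @ (probs - onehot) / len(y)
        W_head -= lr * grad
    return W_head, losses


rng = np.random.default_rng(42)
X = rng.standard_normal((90, 10))
W_base = rng.standard_normal((10, 32)) / np.sqrt(10)
y = np.argmax(X @ rng.standard_normal((10, 3)), axis=1)  # 3 toy "breeds"
W_base_before = W_base.copy()
W_head, losses = train_head_on_frozen_base(X, y, W_base, num_classes=3)
print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

Because the base never changes, its features only need to be computed once. Unfreezing the top convolutional block, as done in this post, would instead mean backpropagating into those layers as well.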

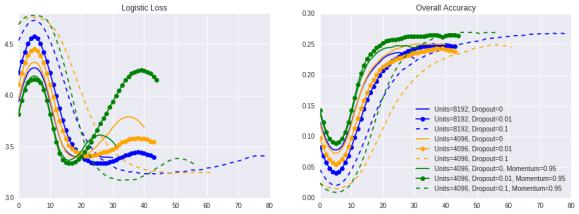

And it looks like it has learned something. I tried a few combinations of hyperparameters and architectures and got the following results. The run with Units=4096, Dropout=0.1 and Momentum=0.95 (green dashed trend line) looks like a useful candidate for further analysis.
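Momentum=0.95 presumably refers to stochastic gradient descent with momentum. Below is a minimal sketch of the classical (non-Nesterov) update in the form Keras documents for its `SGD` optimizer (`velocity = momentum * velocity - learning_rate * g`, then `w = w + velocity`), applied to a toy quadratic loss rather than the real network. The loss, learning rate and step count are assumptions chosen for illustration.

```python
import numpy as np


def sgd_momentum(grad_fn, w0, learning_rate=0.01, momentum=0.95, steps=500):
    """Classical momentum: accumulate a velocity, then step along it."""
    w = np.asarray(w0, dtype=float).copy()
    velocity = np.zeros_like(w)
    for _ in range(steps):
        g = grad_fn(w)
        velocity = momentum * velocity - learning_rate * g
        w = w + velocity
    return w


# Toy loss f(w) = 0.5 * sum(a * w**2) with one gentle and one steep direction.
a = np.array([1.0, 10.0])


def quadratic_grad(w):
    return a * w


w_momentum = sgd_momentum(quadratic_grad, [1.0, 1.0], momentum=0.95)
w_plain = sgd_momentum(quadratic_grad, [1.0, 1.0], momentum=0.0)  # plain gradient descent
print(np.linalg.norm(w_momentum), np.linalg.norm(w_plain))
```

Along the gentle direction, plain gradient descent shrinks `w` by only a factor of 0.99 per step; with momentum 0.95 the velocity builds up and the iterate reaches the minimum much faster, at the cost of some oscillation.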

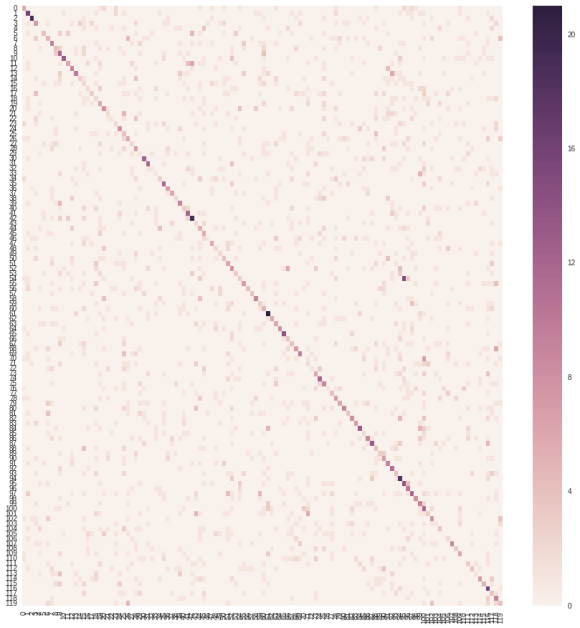

Here’s the confusion matrix (it’s a large one!). Although the diagonal (the correct predictions) stands out, the off-diagonal cells (the incorrect ones) still hold plenty of mistakes that could be reduced.
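For readers who want to reproduce such a matrix, here is a small NumPy sketch. It assumes integer class labels and the convention that rows are true breeds and columns are predicted breeds; the three-breed example and its label names are made up. The trace divided by the total gives accuracy, and the largest off-diagonal cell points at the most confused pair of breeds.

```python
import numpy as np


def confusion_matrix(y_true, y_pred, num_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((num_classes, num_classes), dtype=int)
    np.add.at(cm, (np.asarray(y_true), np.asarray(y_pred)), 1)  # count each (true, pred) pair
    return cm


def most_confused_pair(cm):
    """Return (true_class, predicted_class, count) for the largest off-diagonal cell."""
    off = cm.copy()
    np.fill_diagonal(off, 0)  # ignore correct predictions
    i, j = np.unravel_index(np.argmax(off), off.shape)
    return int(i), int(j), int(off[i, j])


breeds = ["siberian_husky", "malamute", "beagle"]  # hypothetical label names
y_true = [0, 0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 1, 2, 0]

cm = confusion_matrix(y_true, y_pred, num_classes=3)
accuracy = np.trace(cm) / cm.sum()
t, p, n = most_confused_pair(cm)
print(cm)
print(f"accuracy = {accuracy:.3f}")                       # 4 correct out of 7 -> 0.571
print(f"{breeds[t]} mistaken for {breeds[p]} {n} times")  # siberian_husky mistaken for malamute 2 times
```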

So overall, we get a log loss (logistic loss) of roughly 3 to 3.5 and an accuracy of about 25% on a dataset with 120 classes. The accuracy isn’t bad, since random guessing would give under 1%, but the loss could be better. I checked the leaderboard of this competition and, surprisingly, the winners had a log loss of 0 (wow). They got it perfect. So there are definitely better models than mine, but this one works well enough for the next stage… Dog Dreams!
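Log loss is easier to read against a baseline. Here is a minimal sketch of multi-class log loss, the mean of -log p over each image’s predicted probability for its true breed. Clipping probabilities to [eps, 1 - eps] is a common safeguard that I assume here rather than take from the competition rules. A model that spreads probability uniformly over 120 breeds scores ln 120 ≈ 4.79, and exp(-loss) is the geometric mean of the probability given to the correct breed, so a loss of 3 to 3.5 corresponds to roughly 3% to 5% on the right answer in that averaged sense.

```python
import math


def multiclass_log_loss(probs, labels, eps=1e-15):
    """Mean negative log-probability of the true class, with clipping."""
    total = 0.0
    for row, label in zip(probs, labels):
        p = min(max(row[label], eps), 1 - eps)  # keep log() finite
        total -= math.log(p)
    return total / len(labels)


num_classes = 120
labels = [0, 5, 42, 119]
uniform = [[1 / num_classes] * num_classes for _ in labels]
perfect = [[1.0 if k == label else 0.0 for k in range(num_classes)] for label in labels]

print(round(multiclass_log_loss(uniform, labels), 3))      # 4.787, i.e. ln(120)
print(multiclass_log_loss(perfect, labels) < 1e-12)        # True: "perfect" predictions
print(round(math.exp(-3.0), 3), round(math.exp(-3.5), 3))  # 0.05 0.03
```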

What does it see when looking at dogs?

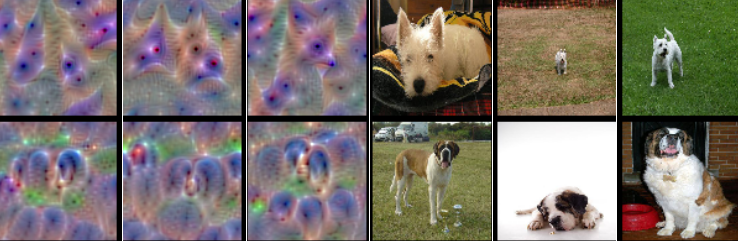

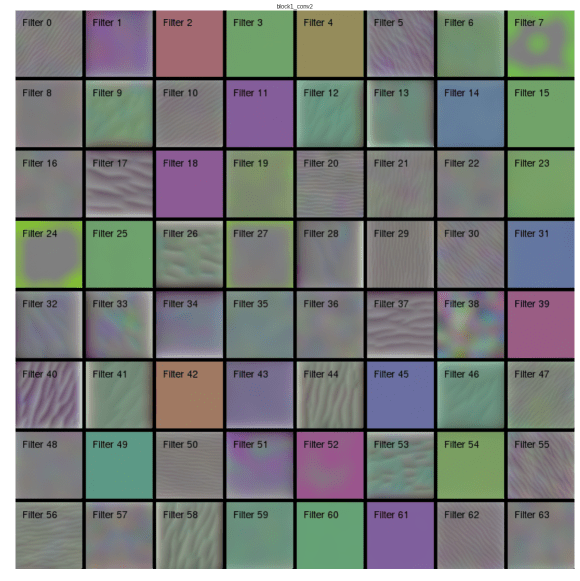

ConvNets learn filters that convolve over input images; these layers make up the feature extraction stage. It turns out you can get a good feel for which image features a filter looks for by feeding in a random image and repeatedly adjusting it to maximize that filter’s activation, a process called gradient ascent. Using the keras-vis library, I obtained the following filter visualizations.
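The core loop of activation maximization fits in a few lines when the "network" is a single linear filter, so the gradient can be written by hand. This is a toy stand-in, not keras-vis’s implementation: real tools backpropagate through the whole network and typically add their own regularizers, whereas here the 3×3 vertical-edge filter, the L2 penalty weight `lam` and the step size are assumptions. Starting from random noise and stepping up the gradient of `sum(w * x) - lam * ||x||^2` drives the input toward `w / (2 * lam)`, an image that looks like the filter itself.

```python
import numpy as np


def maximize_filter_response(w, lam=0.5, lr=0.1, steps=200, seed=0):
    """Gradient ascent on the input x to maximize sum(w * x) - lam * ||x||^2."""
    rng = np.random.default_rng(seed)
    x = 0.1 * rng.standard_normal(w.shape)  # start from a random "image" patch
    for _ in range(steps):
        grad = w - 2 * lam * x  # analytic gradient of the regularized activation
        x = x + lr * grad       # ascent: move with the gradient, not against it
    return x


# Hypothetical low-level filter: responds to a dark-to-bright vertical edge.
vertical_edge = np.array([[-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0]])

x = maximize_filter_response(vertical_edge)
print(np.round(x, 2))  # converges to the edge pattern, since w / (2 * lam) = w here
```

The penalty matters: without it the activation grows without bound as `x` is scaled up, so some form of regularization is what keeps the visualization a recognizable pattern.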

The lower filters mostly search for low-level features such as horizontal or vertical lines and shades of color.

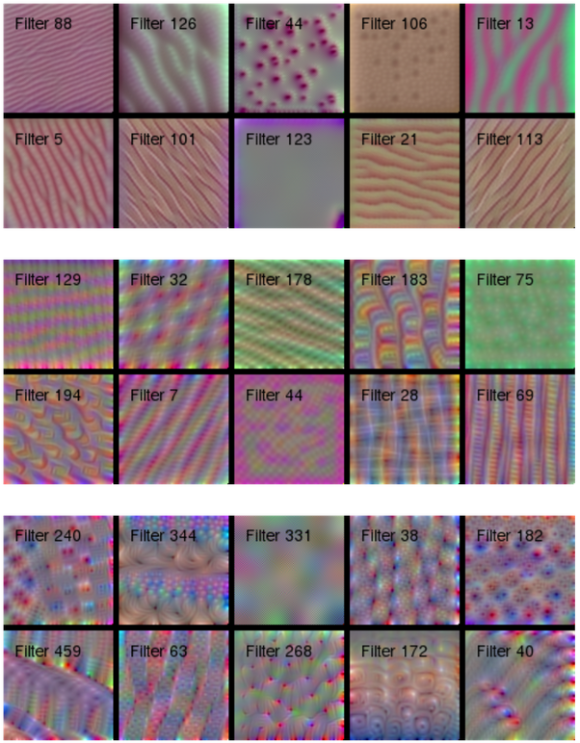

These get progressively more complicated as the network combines the different filters. The next layers’ filters are a sight to behold.

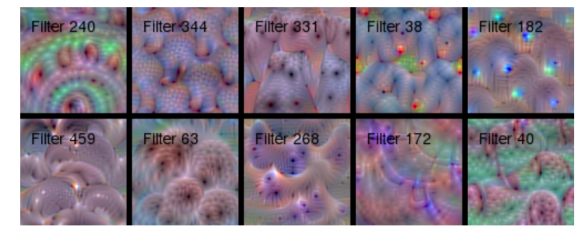

And finally, the filters of the topmost convolutional layer (which I allowed to learn the new domain) begin to resemble dog features. I think I see fur, some snouts, and a lot of eyes. Decently trippy.

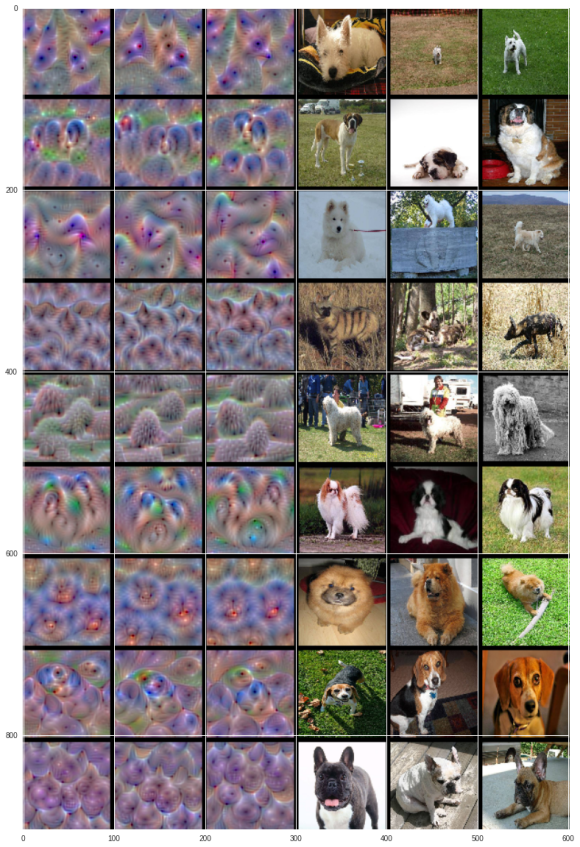

Even trippier images appear when you feed a random image through the network and maximize one class’s activation in the prediction layer. This gives us a look at what the network searches for in a given dog breed. The first six dogs have the best precision, recall and F1, while the last three are somewhere in the middle of the pack.
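Per-breed precision, recall and F1 all come out of the confusion matrix. Here is a minimal NumPy sketch, again assuming rows are true breeds and columns are predictions; the 3×3 matrix is invented for illustration. Precision divides each diagonal entry by its column sum, recall divides it by its row sum, and F1 is their harmonic mean; sorting by F1 is one way to produce a best-to-worst ranking like the one described above.

```python
import numpy as np


def per_class_prf(cm):
    """Precision, recall and F1 per class from a (true x predicted) confusion matrix."""
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    predicted = cm.sum(axis=0)  # column sums: how often each class was predicted
    actual = cm.sum(axis=1)     # row sums: how often each class truly occurs
    zeros = np.zeros_like(tp)
    # Guard against division by zero for classes that are never predicted or never present.
    precision = np.divide(tp, predicted, out=zeros.copy(), where=predicted > 0)
    recall = np.divide(tp, actual, out=zeros.copy(), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=zeros.copy(), where=denom > 0)
    return precision, recall, f1


cm = [[5, 1, 0],
      [2, 3, 1],
      [0, 0, 6]]
precision, recall, f1 = per_class_prf(cm)
ranking = np.argsort(-f1)  # best F1 first
print(f1)       # roughly 0.769, 0.6 and 0.923
print(ranking)  # [2 0 1]
```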

In order, we have the West Highland White Terrier, Saint Bernard, Samoyed, African Hunting Dog, Komondor, Japanese Spaniel, Chow Chow, Beagle and the French Bulldog.

It looks so awesome! The angular face of the Terrier is clearly seen in different orientations. There’s the plump face of the Saint Bernard and the fluffy head of a Samoyed. The stripes of the African Hunting Dog are clearly visible, while the dank dreads of the Komondor beg to be noticed. The Japanese Spaniel’s body type shows up, while the rotund face of the Chow Chow is adorable. The innocent eyes of the Beagle are noticeable, while for French Bulldogs… there seem to be berries. Or a collection of snouts. You tell me.

And that, my friends, is how machines look at dogs. Perhaps that’s also how our brains work, somewhere. We look at tails, head and body shapes, and fur to distinguish dog breeds. Only in the case of machines, it’s straight-up psychedelic.