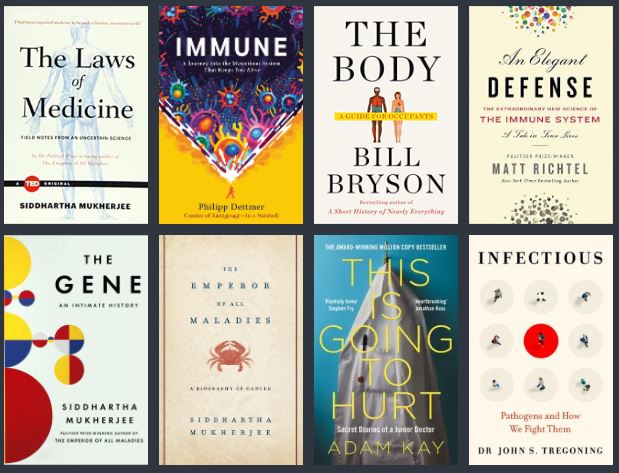

Those who have known me for some time know that I like reading about medical themes in books, articles, and YouTube series. This is somewhat far from my profession, but I think having this kind of interest is healthy (get it?). Pictured above are some books I have enjoyed recently.

Eventually, I began to notice parallels between my discipline and the medical sciences. The immune system, for instance, behaves like a microservices architecture: there is no centralized control, only an emergent system of local inputs and outputs. Or consider genes, whose pathways to different human traits and conditions are as complex as GPT-3 with its 175 billion parameters.

One thing I have been thinking about a lot is how medicine can inform the future of AI. The former is a far-reaching practice with a history of stagnation, horrifying practices, reinvention, and continuous development. The latter is also far-reaching but lacks the benefit of centuries of learning.

In the next few sections, I want to structure my writing around outputs, inputs, and processes. Very software engineering-like, I know. I'll also switch to the term "machine learning," since "AI" may be too broad. Machine learning (ML), a subset of AI, is the body of work concerned with learning useful programs from large datasets.

Disclaimer: I'm a machine learning practitioner, so please don't take any of my statements as equivalent to anatomical fact or prevailing medical opinion. This blog does not contain medical advice, nor is it meant to be medically accurate.

Outputs

It is clear as day: ML will become more ubiquitous. Its outputs will be more widespread, and its effects on our daily lives will go even deeper than Instagram filters. Beyond the hype of self-driving cars and fully automated customer service, we have to consider the systems these new technologies will affect. I bucket these trends into "micro" and "macro" perspectives.

In micro terms, small systems such as houses and warehouses will be transformed. Amazon has already been transforming the latter, and others in the industrial space will follow. In macro terms, large systems such as entire ecosystems and cities will be modeled and managed. On that front, I'm proud of my Filipino compatriots who have won awards from NASA for their epidemic and mobility models built on satellite imagery.

In medicine, the outputs are also promising. Personalized medicine, the intersection of healthcare and genetics (and commercial interests; Apple Watch, I'm looking at you), is prominent in the media today. But from my reading, machine learning and statistics could also play a major role in detecting insurance fraud, overtreatment and undertreatment, doctor burnout, and stress on the healthcare system in general. The book This Is Going to Hurt made that especially clear.

Inputs

Big hardware. Big models. Big data. That trend is what propelled deep learning. With global digitalization and serverless applications in the cloud age, practitioners can rely on an ever-increasing amount of data at an ever-decreasing cost. But in recent years, several machine learning heavyweights, most notably Andrew Ng, have called for a reevaluation of methods. Not everyone can come up with big data. As he puts it,

When you have relatively small data sets, then domain knowledge does become important. [AI systems should have] two sources of knowledge – from the data and from the human experience. When we have a lot of data, the AI will rely more on data and less on human knowledge.

Andrew Ng, in an interview with VentureBeat

In medicine, human experience is encapsulated in large medical ontologies. The Unified Medical Language System (UMLS) is one such resource, bringing together vocabularies from many related fields of medicine. Ontologies like this are the basis of many expert systems, which are in turn boosted by advances in natural language processing.

I believe we should build the know-how to accumulate and process this kind of "small data." Symbolic AI, the good old-fashioned AI, can offer a path forward. It is another reimagining of machine learning, and it could be one framework for tackling our explainability problems.

Processes

Speaking of explainability, I believe it is the most important issue we ML practitioners face. It all boils down to trust. Over the long centuries of medicine, improving trust has been central to the patient-doctor relationship. Trust should also be a guiding principle for building ML systems, and the ML community should develop career paths dedicated to establishing it. I put forward several roles that mirror roles in healthcare.

Operations Focused on ML Outputs

This role is related to, but a bit different from, the rising trend of MLOps. These are analysts or representatives who understand machine learning and have the tools to provide solutions when systems fail spectacularly. They are like the emergency medicine folks of machine learning: sometimes the quickest possible fix is what's needed. Analysts can spot egregious recommendations (e.g., softcore porn on general-audience sites), hotfix computer vision errors related to race (e.g., ID photo verification in mobile apps), and report funny things happening with the chatbot (remember Tay?). Deeper in the back end, engineers should be on the lookout for data drift, model drift, and other ML-system incidents.
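To make "data drift" a bit more concrete, here is a minimal Python sketch of one common drift check, the Population Stability Index (PSI), which compares how a feature is distributed at training time versus in production. The bin edges, the epsilon smoothing, and the 0.1/0.25 thresholds are assumptions (widely used rules of thumb, not a standard); a real monitoring setup would likely track many features against proper baselines.

```python
import math
from bisect import bisect_right
from typing import Sequence


def bin_proportions(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Fraction of values in each bin; `edges` are sorted interior boundaries."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(baseline, current, edges, eps=1e-6) -> float:
    """Sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_proportions(baseline, edges)
    actual = bin_proportions(current, edges)
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins to eps so the logarithm stays finite.
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_level(psi: float, moderate: float = 0.1, significant: float = 0.25) -> str:
    # Thresholds are an assumed rule of thumb; tune them per feature.
    if psi < moderate:
        return "stable"
    if psi < significant:
        return "moderate"
    return "significant"


baseline = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]  # e.g. training-time values of one feature
current = [0.8, 0.85, 0.9, 0.95]                     # e.g. recent production values
edges = [0.25, 0.5, 0.75]
print(drift_level(population_stability_index(baseline, current, edges)))  # significant
```

An alert like this does not say *why* the inputs moved, only that they did; that is exactly the kind of signal an operations person would triage before escalating.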

When systems go bad, this role is like the paramedics who rush to wherever they are needed. In our paradigm of building trust, theirs is a service-recovery type of job. However, we shouldn't be purely reactive. They should also compile their learnings, which should eventually reach the…

Machine Learning Designer-Auditor

This role is something like a healthcare administrator, on whom the hospital relies for efficient care. In ML terms, they establish trust in the product. They learn from users and collect lessons from Operations to build a product that provides the best possible experience. This is far from easy, since the input data may have significant biases that can only be fixed by careful curation and annotation. How the output is used may also need to be reevaluated. For example, instead of directly serving recommendations, designers can add candidate filters (eliminating questionable content) or even "pop" filter bubbles (for example, by diversifying news topics).
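As a rough illustration of the "candidate filter" and "pop the filter bubble" ideas, the Python sketch below drops flagged items and then greedily re-ranks the rest, penalizing topics that have already been picked. The `Candidate` type, its `flagged` field, and the `topic_penalty` value are hypothetical; this is a simplified diversity re-ranker in the spirit of maximal marginal relevance, not a production recommender.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    item_id: str
    topic: str
    score: float           # relevance from some upstream model (hypothetical)
    flagged: bool = False  # set by a hypothetical content-safety check


def filter_and_diversify(candidates, k, topic_penalty=0.3):
    # Step 1: candidate filter, dropping anything flagged as questionable.
    pool = [c for c in candidates if not c.flagged]
    selected = []
    topic_counts = Counter()
    # Step 2: greedy re-ranking; every item already chosen from the same
    # topic lowers a candidate's effective score by `topic_penalty`.
    while pool and len(selected) < k:
        best = max(pool, key=lambda c: c.score - topic_penalty * topic_counts[c.topic])
        selected.append(best)
        topic_counts[best.topic] += 1
        pool.remove(best)
    return selected


feed = [
    Candidate("x", "politics", 0.95, flagged=True),
    Candidate("a", "politics", 0.90),
    Candidate("b", "politics", 0.85),
    Candidate("c", "politics", 0.80),
    Candidate("d", "science", 0.60),
    Candidate("e", "sports", 0.50),
]
print([c.item_id for c in filter_and_diversify(feed, k=3)])  # ['a', 'd', 'b']
```

With `topic_penalty=0` the same feed returns three politics items; the penalty trades a little raw relevance for a broader mix, which is the kind of design decision this role would own.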

This job calls for beyond-accuracy metrics: fairness, usability, impact. These metrics are not easily quantified, but they are critical. In our earlier "emergencies," the softcore porn may come from clickbait images, the result of a model optimized for clicks rather than watch duration or reading time. The input data for our ID-capture app may have lacked images of people of color. The chatbot may fail to understand a language it purports to understand because of a regional dialect.
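One simple way to look beyond a single accuracy number is to break it down by group, as in the ID-capture example. The sketch below assumes each evaluation example carries a (hypothetical) group label, then computes per-group accuracy and the largest gap between groups. This is only one of several fairness notions, and a real audit would also look at other error types and at how the groups were defined.

```python
from collections import defaultdict


def per_group_accuracy(y_true, y_pred, groups):
    """Accuracy computed separately for each group label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}


def accuracy_gap(y_true, y_pred, groups):
    """Difference between the best- and worst-served groups."""
    acc = per_group_accuracy(y_true, y_pred, groups)
    return max(acc.values()) - min(acc.values())


y_true = [1, 1, 1, 1, 1, 1, 1, 1]
y_pred = [1, 1, 1, 1, 1, 0, 0, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]  # hypothetical group labels
print(per_group_accuracy(y_true, y_pred, groups))  # {'A': 1.0, 'B': 0.5}
print(accuracy_gap(y_true, y_pred, groups))        # 0.5
```

Here the overall accuracy is 0.75, which may look acceptable, yet group B is served correctly only half the time: the kind of gap a designer-auditor would want surfaced before launch.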

I am reminded of Siddhartha Mukherjee's Third Law of Medicine: "For every perfect medical experiment, there is a perfect human bias." In our quest for maximum accuracy, we engineers may have forgotten our own biases.

Machine Learning Ethicists

Where designers focus on the product, ethicists focus on the population. A good metaphor is the public health officer, who monitors diseases over time and space. Designers build trust in the product, while ethicists build trust in the entire field. It is a very tall order, but one that needs to be filled. We need more ethicists like Timnit Gebru, who, with her colleagues, sparked a debate with Google over questions of bias, environmental concerns, and explainability.

Some prominent applications have far-reaching consequences if something fails: surveillance, company hiring, and credit scoring. And what if they are too successful? The Cambridge Analytica stories publicly revealed the potential of ML not only to influence microsegments of society but also to polarize entire populations. Like cancerous cells, ML-generated fakes are a destructive mirror of our own cognitive biases, one that continues to multiply and invade. Truly, the lines between targeted marketing, fake news, and PSYOPS have blurred.

Clearly, a lot of work needs to be done in this area, especially in communications. Should we have a CDC-like organization? Yes, I believe so, and it could grow the way the NIH expanded in the 80s, through volunteer groups building up support from the ground. That's why ethicists should be good educators, able to share their discoveries and recommendations so we can incrementally move forward and build trust in AI. But it should not be their task alone. Which is why…

Every Practitioner Needs to Evolve Too

AI professionals also have to contribute to building trust in the field. I can think of two ways: creating a code of ethics for ML, and public education.

A code of ethics would serve as the guiding framework for all ethical ML activities. Such a code is elusive for two reasons: philosophy and implementation. We can look to medicine for inspiration. To redress the massive wrongdoings of the Second World War, the Declaration of Geneva built on the Hippocratic Oath and placed it in the context of the modern world. Following gross injustices in clinical research, the Belmont Report outlined ethical principles for research involving human subjects. Back in ML, I'm not sure whether the Cambridge Analytica stories have become a watershed moment or will be a missed opportunity for change.

As for implementation, medicine has boards of physicians that regulate issues of malpractice, but this model does not port straightforwardly to ML and AI, or even to the closely adjacent field of software engineering. The ACM does have a code of ethics, but unfortunately, not many institutions abide by it or even inform their workers about it. In medicine, there are four pillars: beneficence, non-maleficence, autonomy, and justice. Sometimes these conflict with each other, but there is a mature legal system for grievances. It's not so clear in ML, at least not yet. This is why we need more people in the conversation.

Public education will go a long way toward starting and sustaining the conversation around ML. I hope the computer literacy subjects in our primary and secondary schools cover the expanding influence of ML, introducing our youth to the subtle ways the Internet operates. For adults, I recommend efforts like Crash Course Artificial Intelligence to expand the layperson's understanding.

We should make the conversation as inclusive as possible, keep talking, start doing, and continue reviewing. Like medicine in ages past, AI has a long road ahead of it. Still, I hope we can end the "digital dark ages" early and move on to reinvention and continuous development.

Thanks for reading!